Artificial Intelligence (AI) is no longer a futuristic concept. It’s already here, powering chatbots, recommendation engines, fraud detection systems, healthcare tools, smart factories, and business automation. From startups to global enterprises, AI applications are shaping how decisions are made and how systems operate.

But here’s the critical question many people overlook: How secure are these AI systems?

Cybersecurity for AI applications is not optional. AI systems process massive amounts of sensitive data, learn from inputs, and make automated decisions. If they are not protected, the consequences can be severe: data breaches, manipulated outcomes, biased decisions, financial loss, and reputational damage.

This guide is written in clear, simple English for developers, business leaders, AI enthusiasts, and non-technical professionals who work with or rely on AI systems. You don’t need to be a cybersecurity expert to follow it. Think of cybersecurity for AI as protecting the brain of a smart machine: if someone tampers with it, everything goes wrong.

Let’s explore why cybersecurity training for AI applications is essential, what risks exist, and what kind of training you actually need.

1. Why Cybersecurity Matters for AI Applications

AI systems are powerful because they:

- Process large volumes of data

- Learn from patterns

- Automate decisions

- Operate continuously

But this power also makes them attractive targets. A single vulnerability in an AI application can affect thousands, or millions, of users instantly.

Cybersecurity for AI is about trust. If users don’t trust AI systems to be safe, fair, and reliable, adoption collapses. Proper cybersecurity training ensures AI systems remain dependable and secure.

2. What Makes AI Systems Different from Traditional Software

Traditional software follows fixed rules. AI systems learn and adapt.

This difference introduces new risks:

- AI models can be manipulated

- Training data can be poisoned

- Outputs can be influenced by attackers

- Decisions may be hard to explain

Securing AI is not just about protecting code; it’s about protecting data, models, and decision logic.

3. Who Needs Cybersecurity Training for AI

Cybersecurity training for AI applications is not just for security teams. It’s essential for:

- AI engineers and data scientists

- Software developers

- Product managers

- Business leaders using AI tools

- Compliance and risk teams

- Startup founders building AI products

If you build, deploy, manage, or rely on AI, you need basic AI cybersecurity awareness.

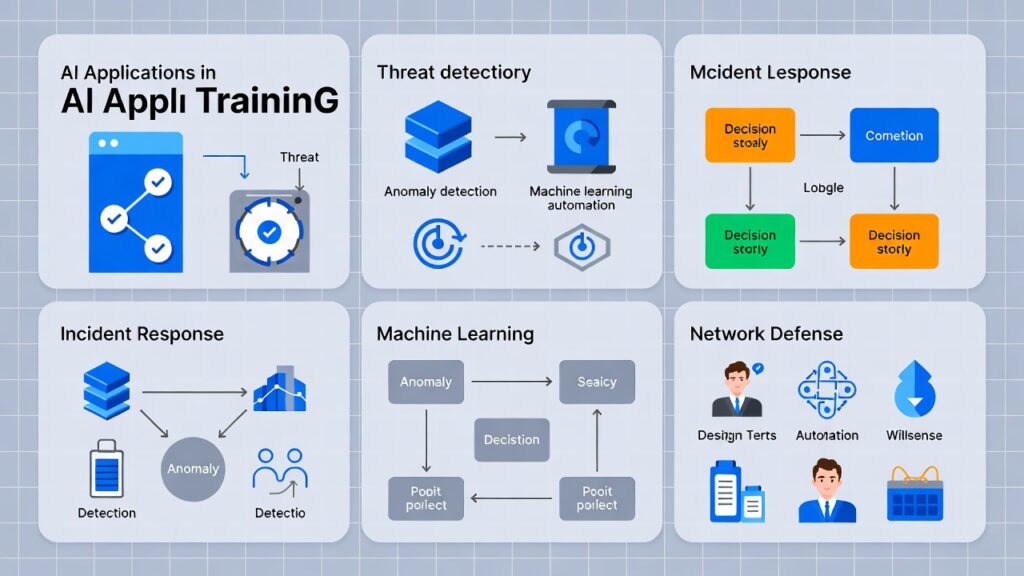

4. Common Cyber Threats Targeting AI Applications

AI systems face both traditional and AI-specific threats, including:

- Data breaches – theft of training or user data

- Model theft – stealing proprietary AI models

- Data poisoning – injecting malicious data into training sets

- Adversarial attacks – manipulating inputs to fool AI

- API abuse – exploiting AI endpoints

Training helps teams recognize these threats early, before damage occurs.
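
To make the adversarial attack item above concrete, here is a toy sketch in Python. It assumes a hypothetical linear classifier with made-up numbers (`WEIGHTS`, `BIAS`) and shows how a small, targeted change to each input feature can flip the prediction. Real models and real attacks are far more complex; every name and value here is an illustrative assumption.

```python
# Toy linear classifier; the weights and bias are invented for illustration.
WEIGHTS = [2.0, -1.0, 0.5]
BIAS = -0.5


def score(features):
    """Weighted sum of the features plus the bias."""
    return sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS


def predict(features):
    """Return 1 (positive class) when the score is above zero, else 0."""
    return 1 if score(features) > 0 else 0


def perturb(features, epsilon):
    """Shift each feature by epsilon in the direction that lowers the score."""
    shifted = []
    for w, x in zip(WEIGHTS, features):
        direction = 1.0 if w > 0 else -1.0 if w < 0 else 0.0
        shifted.append(x - epsilon * direction)
    return shifted


original = [0.5, 0.2, 0.4]
adversarial = perturb(original, epsilon=0.2)

print(predict(original))     # class for the untouched input
print(predict(adversarial))  # class after the small perturbation
```

No feature moves by more than `epsilon`, yet the predicted class changes. That is why training on this topic stresses testing models with deliberately manipulated inputs, not only clean ones.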

5. Data Security: The Foundation of Secure AI

AI systems are only as good, and as safe, as their data.

Cybersecurity training emphasizes:

- Secure data collection

- Data encryption

- Access controls

- Data integrity checks

- Secure storage

If data is compromised, AI outputs become unreliable. Securing data is like protecting the fuel that powers the engine.
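
As a minimal sketch of the data integrity checks mentioned above, the Python snippet below records a SHA-256 fingerprint for each dataset file and later reports any file whose content no longer matches. The function names (`sha256_hex`, `find_tampered`) and the in-memory `files` dictionary are assumptions made for illustration; a real pipeline would read files from storage and keep the manifest somewhere attackers cannot edit.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 fingerprint of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def find_tampered(files: dict, manifest: dict) -> list:
    """Return names of files that are unknown or differ from the manifest."""
    tampered = []
    for name, data in files.items():
        expected = manifest.get(name)
        # compare_digest avoids leaking information through timing differences.
        if expected is None or not hmac.compare_digest(sha256_hex(data), expected):
            tampered.append(name)
    return sorted(tampered)


# Hypothetical dataset files held in memory for the example.
files = {"train.csv": b"amount,label\n10,0\n", "labels.csv": b"0\n"}
manifest = {name: sha256_hex(data) for name, data in files.items()}

files["train.csv"] = b"amount,label\n10,1\n"  # simulate a silent edit
print(find_tampered(files, manifest))
```

The check only detects changes made after the manifest was created, so it complements, and does not replace, access controls and secure collection.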

6. AI Model Manipulation and Poisoning Risks

One risk unique to AI is poisoning. Attackers subtly modify training data so that the model learns incorrect or biased behavior.

Examples include:

- Fraud detection models missing real fraud

- Recommendation systems promoting harmful content

- Facial recognition misidentifying individuals

Cybersecurity training teaches how to:

- Validate data sources

- Monitor model behavior

- Detect anomalies

- Control training pipelines
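
One simple way to start detecting anomalies in training data is to compare the label mix of a new batch against a trusted baseline. The sketch below is a hedged illustration of that idea, not a complete defense: the function names and the 10% threshold (`max_shift`) are assumptions, and a careful attacker can poison data without moving label shares at all.

```python
from collections import Counter


def label_shares(labels):
    """Return each label's share of the batch as a fraction between 0 and 1."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def shifted_labels(baseline, batch, max_shift=0.10):
    """Return labels whose share changed by more than max_shift."""
    base = label_shares(baseline)
    new = label_shares(batch)
    flagged = {}
    for label in set(base) | set(new):
        delta = new.get(label, 0.0) - base.get(label, 0.0)
        if abs(delta) > max_shift:
            flagged[label] = round(delta, 3)
    return flagged


# Hypothetical fraud-detection labels: fraud drops from 20% to 2% of the data.
baseline = ["fraud"] * 20 + ["ok"] * 80
suspicious_batch = ["fraud"] * 2 + ["ok"] * 98

print(shifted_labels(baseline, suspicious_batch))
```

A flagged batch is a prompt for human review of the data source before retraining, not proof of an attack.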

7. Securing AI Pipelines and Workflows

AI pipelines include:

- Data ingestion

- Preprocessing

- Model training

- Deployment

- Monitoring

Each step introduces potential vulnerabilities. Training ensures teams understand where attacks can happen and how to secure each stage.

A secure AI pipeline is like a secured factory line: every checkpoint matters.

8. Access Control and Identity Management in AI Systems

Who can access AI models, datasets, and systems?

Poor access control leads to:

- Unauthorized model changes

- Data leaks

- Insider threats

Cybersecurity training covers:

- Role-based access control

- Least privilege principles

- Secure authentication

- Monitoring user activity

Access control is often the simplest, and most overlooked, security measure.
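
Role-based access control and least privilege can be sketched in a few lines of Python. The roles and permission strings below are hypothetical examples, not a recommended scheme; the point is that each role gets only the permissions it needs and anything not explicitly granted is denied.

```python
# Hypothetical roles and permissions, chosen only for illustration.
ROLE_PERMISSIONS = {
    "data_scientist": {"dataset:read", "model:train"},
    "ml_engineer": {"model:read", "model:deploy"},
    "analyst": {"model:predict"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions get False."""
    return permission in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("analyst", "model:predict"))  # granted to this role
print(is_allowed("analyst", "model:deploy"))   # not granted to this role
print(is_allowed("intern", "dataset:read"))    # unknown role
```

In production this logic usually lives in an identity provider or cloud IAM service rather than in application code, but the deny-by-default principle is the same.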

9. Cloud Security for AI Applications

Many AI applications run in the cloud. While cloud platforms are powerful, misconfigurations are a major risk.

Training helps teams understand:

- Shared responsibility models

- Secure cloud storage

- Identity and access management

- Monitoring and logging

Cloud security mistakes are like leaving the office door open at night: avoidable with awareness.

10. API Security in AI-Powered Systems

AI applications often expose APIs for:

- Predictions

- Data input

- Integrations

Unsecured APIs are a common attack vector.

Cybersecurity training teaches:

- API authentication

- Rate limiting

- Input validation

- Monitoring for abuse

APIs are doors; training ensures they’re locked and monitored.
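
Two of the controls listed above, rate limiting and input validation, can be illustrated with a short Python sketch. It assumes a hypothetical prediction endpoint that accepts a fixed-length list of numbers; the class and function names, the limits, and the per-key tracking are all illustrative choices rather than a specific framework's API.

```python
import math
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per API key within window_seconds."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock
        self._hits = defaultdict(deque)

    def allow(self, api_key):
        now = self.clock()
        hits = self._hits[api_key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


def validate_features(payload, expected_length):
    """Return the payload as a list of finite floats, or raise ValueError."""
    if not isinstance(payload, list) or len(payload) != expected_length:
        raise ValueError("payload must be a list of the expected length")
    values = []
    for item in payload:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(item, bool) or not isinstance(item, (int, float)):
            raise ValueError("every feature must be a number")
        try:
            value = float(item)
        except OverflowError:
            raise ValueError("feature is too large") from None
        if not math.isfinite(value):
            raise ValueError("features must be finite")
        values.append(value)
    return values
```

A request handler would call `limiter.allow(api_key)` first and `validate_features(...)` second, rejecting the request if either check fails. Limiting the number of calls per key also slows down attempts to copy a model by querying it repeatedly.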

11. Privacy Risks in AI and How to Reduce Them

AI systems often process personal or sensitive data.

Training covers:

- Data minimization

- Anonymization techniques

- Secure data sharing

- Privacy-by-design principles

These practices align with guidance from government authorities such as the U.S. Cybersecurity and Infrastructure Security Agency (CISA).
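
Data minimization and pseudonymization can be sketched together in Python. The example below keeps only an allow-list of fields and replaces the user identifier with a keyed hash. The field names, `ALLOWED_FIELDS`, and the key are assumptions for illustration. Note that a keyed hash is pseudonymization rather than full anonymization: anyone holding the key can recompute the mapping, so the key must be stored and rotated securely.

```python
import hashlib
import hmac

# Hypothetical allow-list: only these fields are needed by the model.
ALLOWED_FIELDS = {"user_id", "age_band", "country"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 hash (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list and pseudonymize the user ID."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "user_id" in kept:
        kept["user_id"] = pseudonymize(str(kept["user_id"]), secret_key)
    return kept


# Example key only; a real key would come from a secrets manager.
key = b"example-key-do-not-use"
record = {
    "user_id": "u-1001",
    "email": "person@example.com",
    "age_band": "30-39",
    "country": "DE",
}

print(minimize(record, key))
```

The email address never reaches the training set, and the same user always maps to the same pseudonym, so records can still be linked without exposing the original ID.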

12. Ethical AI and Security Awareness

Security and ethics go hand in hand.

Cybersecurity training for AI includes awareness of:

- Bias risks

- Fairness concerns

- Transparency

- Responsible AI use

A secure AI system that produces harmful or biased outcomes still fails. Training ensures teams consider both safety and responsibility.

13. Secure AI Development Lifecycle (AI-SDLC)

Just like software has an SDLC, AI needs a Secure AI Development Lifecycle.

Training introduces:

- Security from the design stage

- Secure coding practices

- Testing AI models for abuse

- Continuous monitoring

Security added later is expensive. Security built in from the start is efficient.

14. Cybersecurity Training Topics for AI Teams

Effective AI cybersecurity training typically covers:

- AI threat awareness

- Data security fundamentals

- Model protection techniques

- Cloud and API security

- Incident response basics

Training doesn’t need to be overly technical; it needs to be relevant and practical.

15. Non-Technical AI Security Awareness for Business Users

Business leaders and users also need AI cybersecurity awareness.

Training helps them:

- Ask the right security questions

- Understand AI risks

- Make informed decisions

- Avoid blind trust in AI outputs

AI should support decisions, not replace human judgment.

16. How Cybersecurity Training Reduces AI Risks

Cybersecurity training reduces risk by:

- Preventing human errors

- Improving early threat detection

- Strengthening processes

- Building a security-first culture

Many AI security failures stem from a lack of awareness, not a lack of tools.

17. How Safelora Supports Cybersecurity Training for AI

Platforms like Safelora provide accessible cybersecurity training designed for modern technologies, including AI applications.

Safelora focuses on:

- Practical cybersecurity education

- Beginner-to-intermediate learning paths

- Real-world AI security awareness

- Flexible online training

This makes it easier for teams and individuals to build AI security knowledge without complexity.

18. The Future of Cybersecurity in AI Applications

AI will continue to grow, and so will the attacks targeting it.

The future will demand:

- Continuous cybersecurity training

- Cross-functional awareness

- Security embedded into AI design

- Strong collaboration between AI and security teams

Cybersecurity for AI is not a one-time effort; it’s an ongoing commitment.

Conclusion

Cybersecurity for AI applications is no longer optional; it’s essential. AI systems influence decisions, automate processes, and handle sensitive data. Without proper security training, even the most advanced AI can become a liability.

The good news? You don’t need to be a cybersecurity expert to get started. With the right training, awareness, and habits, individuals and organizations can protect their AI systems effectively.

AI is powerful, but only when it’s secure, trustworthy, and responsibly managed.

Frequently Asked Questions (FAQs)

1. Is cybersecurity training necessary for AI applications?

Yes. AI systems face unique risks that require specific security awareness.

2. Do AI developers need cybersecurity knowledge?

Absolutely. Secure AI starts with informed developers and data scientists.

3. Are AI systems more vulnerable than traditional software?

They can be, especially due to data and model manipulation risks.

4. Can non-technical professionals benefit from AI cybersecurity training?

Yes. Awareness helps business users make safer decisions with AI tools.

5. Is online cybersecurity training effective for AI security?

Yes. Online training offers flexible, practical learning suited for fast-evolving AI environments.